Micro-Deck

Bridging the Intention-Behavior Gap

A Minimalist Habit Initiation Tool

Team HabitHelp

Evan Neff • Maddie Hutchings • Thomas Chapman • David Schraedel

February 2026

The Intention-Behavior Gap

The Real Problem

- It's not a lack of goals

- It's the 30 seconds of friction between intention and action

- Standard apps do harm through guilt or complexity


Real People, Real Struggles

Thomas' Sister

ADHD, 18-24

"Never ending checklist... will start one, then get distracted and start another"

Marketing Student

User #3

"Alarms fail because I fall back into my routine"

Rugby Player

User #4

"Setting achievable goals. Too many goals at once"

System Understanding

📊 [Image Placeholder]

Cue → Craving → [INITIATION GAP] → Action → Reward


The Habit Loop Components

Traditional Habit Loop:

- Cue: Environmental trigger

- Craving: Desire to act

- 🎯 INITIATION GAP (Our Target)

- Action: Behavior performed

- Reward: Reinforcement

Why This Matters:

- Most apps track after the action

- We intervene before the action

- More effective than tracking after the fact

Based on BJ Fogg's Tiny Habits & Gollwitzer's Implementation Intentions

Execution & Debugging Loop

Phase-by-Phase Implementation

Phase 1 ✓

- Flutter scaffold

- Database (sqflite)

- Data models

Phase 2 ✓

- Welcome screen

- Onboarding flow

- Timer screen

Phase 3 ⚙

- Notifications ✓

- Settings ✓

- Final integrations

Iterative Development Process

- Test → Log → AI Fix → Retest

- Clean Git history with meaningful commits

- Multi-session workflow, not one-shot generation

Falsifiability & The Hypothesis

Core Hypothesis

Key Test

- Showed mockup to 5 potential users

- Asked: "Would this help more than your current method?"

- Result: 3/5 yes, 2/5 unconvinced


How We Could Be Wrong

Potential Failure Modes:

- People use notes/calendar instead

- 2 minutes is too long or too short

- Phone not available as timer

- Doesn't create lasting habits

Critical Pivot:

One user already uses HabitShare for tracking and wouldn't switch.

Result: We doubled down on initiation only, not tracking over time.

Document-Driven Architecture

"We didn't start with code. We started with documents."

📁 /aiDocs Structure

- prd.md (457 lines)

- architecture.md (213 lines)

- mvp.md (273 lines)

- context.md

How It Works

- PRD created before code

- Architecture defines constraints

- AI reads docs → follows rules

- Documents prevent scope creep


Strict System Constraints

- Flutter 3.22+ with Riverpod state management

- SQLite local storage (sqflite ^2.4.2)

- Offline-only: Zero network requests, no cloud

- No telemetry: No analytics SDKs

- Cross-platform: iOS 16+, Android API 26+

"When AI suggests something outside scope, we point it back to the docs"

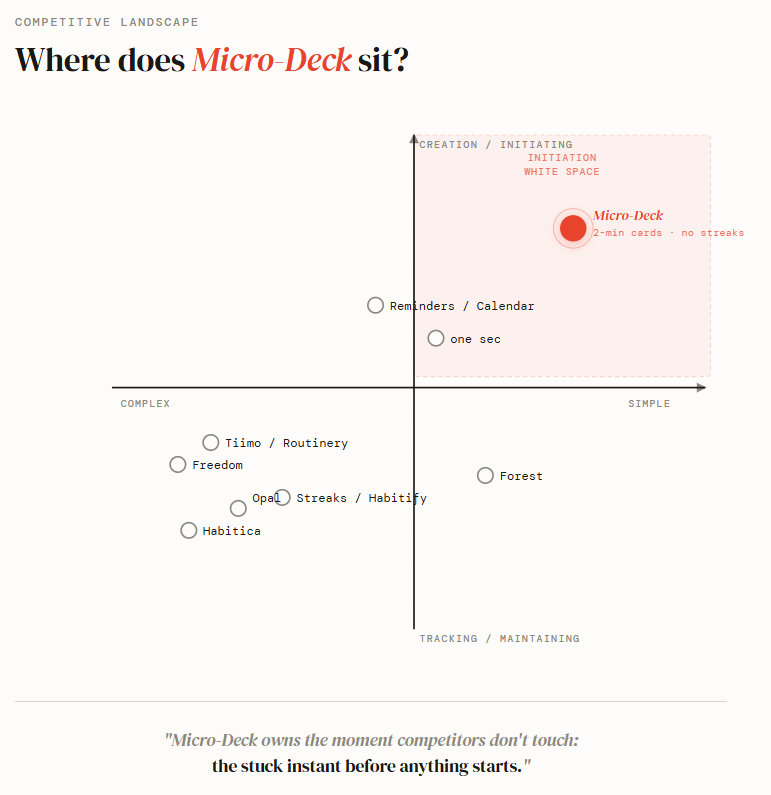

The White Space

Where Competitors Fail:

- Habit trackers (Streaks, Habitify) - Act after the action, guilt-based ($0-10/mo)

- App blockers (Opal $19.99/mo, Freedom $99.50 lifetime) - Restrictive, police behavior

- Calendars & motivation apps - Lack immediate support

- Focus timers (Forest, Tiimo) - Require the user to already be in motion

Micro-Deck's White Space:

Owns the initiation space by helping break down the first step without policing the user

Our Differentiation: No history, no tracking, no guilt. 2-minute sessions, not 25-minute Pomodoros.

Live Demo

📱 [Image Placeholder]

Physical device or screen recording

The Complete Loop

- Onboarding → Timer → Completion

- Haptic feedback: "You started."

- Local persistence (no cloud)

No streak. No score. No guilt.


Demo Flow Step-by-Step

- Cold Launch → Welcome screen

- Step 1: "What do you want to work toward?" → "Exercise more"

- Step 2: "What's one tiny thing that starts it?" → "Put on running shoes"

- Confirmation: "Let's do two minutes right now"

- Timer Screen: Full-screen countdown, pulsing dot

- Completion: Haptic pulse, "That's it. You started."

- Deck View: Card appears, tap to repeat

- Add Card: Show [+] button functionality

Success Metrics & Next Steps

Success Metrics

- ≥60% activation rate

- ≥25% Day 7 retention (no streaks!)

- ≥80% session completion

- Users say "this feels different"

Failure Indicators

- Onboarding abandonment

- >20% of sessions abandoned

- Users don't return without notifications

- "This is just a timer"
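As a rough sketch of how the session thresholds above might be checked during behavioral testing: the snippet below treats a session with no completion time as abandoned and compares the rates against the 80% and 20% figures from this slide. It is written in Python for brevity rather than in the app's Dart code, and the `Session` class, `evaluate` function, and the way session records get collected from test devices are all assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional

# Thresholds taken from the slide; everything else here is illustrative.
MIN_COMPLETION_RATE = 0.80  # success metric: >=80% session completion
MAX_ABANDON_RATE = 0.20     # failure indicator: >20% of sessions abandoned


@dataclass
class Session:
    """Hypothetical record of one timer session."""
    started_at: datetime
    completed_at: Optional[datetime]  # None means the session was abandoned


def evaluate(sessions: list[Session]) -> dict:
    """Compare completion and abandon rates against the slide's thresholds."""
    total = len(sessions)
    if total == 0:
        # No data yet: report zero rates and no verdict either way.
        return {
            "completion_rate": 0.0,
            "abandon_rate": 0.0,
            "meets_success_metric": False,
            "hits_failure_indicator": False,
        }
    completed = sum(1 for s in sessions if s.completed_at is not None)
    abandoned = total - completed
    completion_rate = completed / total
    abandon_rate = abandoned / total
    return {
        "completion_rate": completion_rate,
        "abandon_rate": abandon_rate,
        "meets_success_metric": completion_rate >= MIN_COMPLETION_RATE,
        "hits_failure_indicator": abandon_rate > MAX_ABANDON_RATE,
    }
```

Because the two thresholds are complementary, a test group sitting at exactly 80% completion meets the success metric without tripping the failure indicator.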

Next: Complete Phase 3, CLI tests, behavioral testing with 10+ users, TestFlight beta


Pivot Plans if Hypotheses Fail

- If initiation works but retention fails: Add gentle follow-up mechanisms (not streaks)

- If users need more guidance: Add AI-assisted card creation

- If 2 minutes is wrong: Test 1-minute and 5-minute defaults

- If positioning unclear: Refine onboarding messaging

"By final presentation, we'll have real user data, not just customer interviews"

Questions & Discussion

All team members ready to answer questions about:

- Technical implementation details

- Customer research methodology

- Competitive positioning

- Success metrics and validation plans

- Process and documentation approach

Backup A: Detailed Customer Research

5 Customer Interviews - Key Insights

User #1 (18-24, ADHD - Thomas' Sister)

"There's just always so many tasks... never ending checklist, then get distracted... will start one task, then get distracted partway through and start another"

"It's difficult for neurodivergent people to build habits... they have to think hard about each step, which is why people like that use apps and reminders"

"Liked that it splits stuff into smaller tasks... curious if it adds extra unnecessary steps"

Sister insight: "If her phone is telling her to do stuff she magically ends up on Instagram. She liked that our app was black and white (less distracting)"

User #2 (Existing habit tracker user)

Uses HabitShare for end-of-day tracking. Challenge: Wouldn't switch unless we're different.

This feedback made us double down on initiation focus, not tracking.

User #3 (Marketing student)

"I want my goals to change into a habit... It has to be an alarm but I fall back into my routine"

"I like how it has you do it right when you do it... I like how you have to set an action to get started... super simple to navigate"

User #4 & #5

User #4 (Rugby player) main challenge: "Setting achievable goals. Too many goals at once"

Validates our focus on minimal cards, not overwhelming lists.

User #5 uses friends for accountability - wants "active accountability" and "celebrating wins with other people"

Future consideration: Optional social features, but not in v1 (contradicts privacy-first philosophy)

Common Themes

- 4/5 users: "Starting is harder than continuing"

- 3/5 users: "Existing apps add complexity instead of reducing it"

- 2/5 users: "Streak-based systems create guilt, not motivation"

- 5/5 users: "Phone is both the problem and the solution"

Backup B: Market Research & Target Personas

Market Size

Three Core Personas

People with ADHD/Executive Dysfunction

15.5M diagnosed U.S. adults (CDC)

Struggle with task initiation despite knowing what to do

Harmed by streak-based guilt mechanics

Digital Burnout Sufferers

Recognize their phone as a distraction trap

Actively seeking alternatives to doomscrolling

Skeptical of engagement tactics

Productivity Minimalists

Tried complex systems (Notion, routine apps)

Maintenance overhead defeats the purpose

Want one tool that does one thing well

Full Competitive Landscape (7 Clusters)

| Competitor | Price | Category | Why Users Leave |
|---|---|---|---|
| Opal | $19.99/mo | App blocker | Unreliable blocking, cluttered UI |
| Freedom | $99.50 lifetime | App blocker | Bypass workarounds, support friction |
| one sec | $2.99/mo | Friction tool | Setup friction, annoying by design |
| Forest | Paid | Focus timer | Gamification fatigue, feature bloat |
| Tiimo/Routinery | Various | Routine planner | "Too much system upkeep" |
| Streaks/Habitify | Various | Habit tracker | Streak guilt, shame on bad days |

Identified Risks

- Retention without streaks

- Onboarding friction (need to get to first win quickly)

- iOS notification limits (64-notification queue workaround)

- Monetization (one-time purchase vs. subscription)

Backup C: Behavioral Science Foundation

| Principle | Research Basis | How Micro-Deck Applies It |
|---|---|---|
| Implementation Intentions | Gollwitzer (1999) - "if-then" planning improves follow-through | Card scheduling ("When it's 7am Monday, I will put on running shoes") |
| Minimum Viable Behavior | BJ Fogg's Tiny Habits - anchor to smallest possible version | 2-minute default timer; short enough that the brain can't argue |
| Autonomy Support | Self-Determination Theory - user-authored goals reduce reactance | User creates all cards; app never assigns tasks |
| Contextual Cueing | Habit loop research (Clear, Wood) - environmental cues drive initiation | Scheduled notifications tied to specific cards and times |
| Completion Signaling | Operant conditioning - clear, immediate feedback reinforces behavior | Haptic pulse on timer completion |

Design Principle: This research is embedded in the experience - not marketed as a feature claim.

Backup D: Data Models & Architecture

Core Data Models (SQLite)

Goal

- id: UUID

- label: String

- createdAt: DateTime

Card

- id: UUID

- goalId: UUID (nullable - card can exist without goal)

- actionLabel: String

- durationSeconds: Int (default: 120)

- sortOrder: Int

- isArchived: Bool

- createdAt: DateTime

Schedule (Pro)

- id: UUID

- cardId: UUID

- weekdays: [Int] (0=Sun ... 6=Sat)

- timeOfDay: TimeOfDay

- isRecurring: Bool

- isActive: Bool

Session

- id: UUID

- cardId: UUID

- startedAt: DateTime

- completedAt: DateTime (nullable - null = abandoned)

- durationSeconds: Int
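One way the models above could map onto SQLite tables is sketched below, using Python's built-in `sqlite3` module instead of the app's actual sqflite code. The table names, snake_case column names, storing UUIDs and timestamps as TEXT, the `sort_order` default of 0, and the `open_db` and `add_card` helpers are all assumptions for illustration; the Schedule table is left out for brevity.

```python
import sqlite3
import uuid
from datetime import datetime, timezone

# Assumed mapping: UUID -> TEXT, DateTime -> ISO-8601 TEXT, Bool -> INTEGER 0/1.
SCHEMA = """
CREATE TABLE goal (
    id          TEXT PRIMARY KEY,
    label       TEXT NOT NULL,
    created_at  TEXT NOT NULL
);
CREATE TABLE card (
    id               TEXT PRIMARY KEY,
    goal_id          TEXT REFERENCES goal(id),       -- nullable: card can exist without goal
    action_label     TEXT NOT NULL,
    duration_seconds INTEGER NOT NULL DEFAULT 120,   -- 2-minute default
    sort_order       INTEGER NOT NULL DEFAULT 0,     -- default is an assumption
    is_archived      INTEGER NOT NULL DEFAULT 0,
    created_at       TEXT NOT NULL
);
CREATE TABLE session (
    id               TEXT PRIMARY KEY,
    card_id          TEXT NOT NULL REFERENCES card(id),
    started_at       TEXT NOT NULL,
    completed_at     TEXT,                           -- NULL = abandoned
    duration_seconds INTEGER NOT NULL
);
"""


def open_db(path: str = ":memory:") -> sqlite3.Connection:
    """Open a database, enable foreign keys, and create the tables."""
    conn = sqlite3.connect(path)
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript(SCHEMA)
    return conn


def add_card(conn: sqlite3.Connection, action_label: str, goal_id=None) -> str:
    """Insert a card, relying on the column defaults for everything optional."""
    card_id = str(uuid.uuid4())
    created_at = datetime.now(timezone.utc).isoformat()
    conn.execute(
        "INSERT INTO card (id, goal_id, action_label, created_at) VALUES (?, ?, ?, ?)",
        (card_id, goal_id, action_label, created_at),
    )
    return card_id
```

Calling `add_card(conn, "Put on running shoes")` with no goal stores a card with a NULL `goal_id` and a 120-second duration, matching the nullable field and default in the model.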

iOS 64-Notification Limit Workaround

- All schedules stored in Schedule table

- On every app open: cancel all pending notifications, compute next 40 upcoming instances, register those

- On schedule create/edit/delete: trigger immediate recompute

- If notifications denied: schedules remain stored, app remains fully functional
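The recompute step described above can be sketched roughly as follows: merge the upcoming fire times of every active schedule and keep only the first 40. This is an illustrative Python sketch, not the app's Dart implementation; the `Schedule` dataclass, `occurrences`, and `next_notifications` are hypothetical names, times are treated as naive local times, and the actual cancel and register calls to the platform's notification API are not shown.

```python
import heapq
from dataclasses import dataclass
from datetime import datetime, time, timedelta
from itertools import islice
from typing import Iterator

MAX_REGISTERED = 40  # from the slide; stays under the iOS cap of 64 pending notifications


@dataclass(frozen=True)
class Schedule:
    """Hypothetical in-memory form of a row from the Schedule table."""
    card_id: str
    weekdays: frozenset[int]  # 0=Sun ... 6=Sat, as in the data model
    time_of_day: time
    is_recurring: bool = True
    is_active: bool = True


def occurrences(s: Schedule, now: datetime) -> Iterator[tuple[datetime, str]]:
    """Yield (fire_time, card_id) in ascending order, strictly after `now`."""
    valid_days = s.weekdays & frozenset(range(7))
    if not s.is_active or not valid_days:
        return  # nothing to schedule; also avoids an endless search below
    day = now.date()
    while True:
        fire = datetime.combine(day, s.time_of_day)
        # Python counts Monday as 0; the model counts Sunday as 0, so shift by one.
        if (fire.weekday() + 1) % 7 in valid_days and fire > now:
            yield fire, s.card_id
            if not s.is_recurring:
                return  # one-off schedules fire only once
        day += timedelta(days=1)


def next_notifications(schedules, now: datetime, limit: int = MAX_REGISTERED):
    """Return the earliest `limit` upcoming notifications across all schedules."""
    merged = heapq.merge(*(occurrences(s, now) for s in schedules))
    return list(islice(merged, limit))
```

Under this sketch, the app would cancel all pending notifications, call `next_notifications` with the stored schedules, and register the returned list, both on app open and after any schedule create, edit, or delete.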

Offline-First: No backend. No user account. No telemetry. Zero network requests in v1.

Git Workflow & Tech Stack

Clean Git History:

- Meaningful commits: "Updates to Phase 3", "Updated architecture, context, prd"

- .gitignore properly configured (no secrets)

- 5 iterative commits showing incremental progress, not one big commit

Tech Stack:

- Flutter 3.22+ (iOS primary)

- Riverpod state management (^3.2.1)

- SQLite local storage (sqflite ^2.4.2)

- shared_preferences ^2.5.4

- iOS 16+, Android API 26+